Layer 1: CAPTCHA Passed the Session. Here Is What It Did Not Catch.

This is Post 2 in Opportify's Identifier Trust Layers series. The framework maps where trust can be evaluated across the full user lifecycle, and where the gaps are. Each post covers one layer in depth.

The Identifier Trust Layers is Opportify's original framework. It is not an industry standard or third-party model.

Your form sees everything. Most fraud stacks see nothing until after the submission lands.

Every form submission is the end of a session: a device, a network connection, a series of interactions with a page. Before the data arrives in your CRM or submission queue, a user loaded your page, moved their mouse, focused on fields, typed characters, corrected typos, scrolled, paused, and clicked submit. All of that happened. Most teams discard every signal from it.

What arrives in your pipeline is a record - a name, an email address, a phone number, an IP. The session that produced it is gone, unexamined. And the session is often the most informative part.

This is Layer 1 in Opportify's Identifier Trust Layers framework: Interaction and Session Intelligence. It is the first layer where signals from actual human behavior become available for evaluation, and it is also where the most widely deployed fraud protection mechanism - CAPTCHA - both operates and falls significantly short.

Your Form Sees Everything. You See Nothing.

The network layer (Layer 0 in Opportify's Identifier Trust Layers framework) evaluates risk before any interaction occurs. It sees infrastructure: IP reputation, traffic patterns, known threat signatures. Once a request passes the network layer and a user loads your page, an entirely new category of evidence becomes available - and, for most teams, it goes completely unread.

From the moment your page loads, a session accumulates signals. The device characteristics of the browser making the request. The timing between page load and first field focus. Whether cursor movement looks like a person exploring a form or a script executing a sequence. How quickly characters appear in each field. Whether a field was tabbed into or clicked. Whether the form was completed in a continuous flow or paused at certain points. Whether anything was corrected.

None of these signals are visible in the submission record. They exist only in the session. By the time the submission arrives, the session is over, and everything that happened inside it, everything that might have flagged this submission as anomalous, was either captured during the session or lost permanently.

The consequence is stark: a team that only evaluates submitted records is always working from incomplete evidence. The session is not supplementary context. In many fraud cases, it is the primary signal.

Layer 1 is where that evidence lives. It is also where CAPTCHA operates, which makes CAPTCHA the natural starting point for understanding this layer - both what it covers and what it does not.

CAPTCHA Limitations: What CAPTCHA Fails to Catch

CAPTCHA belongs at the interaction layer because it requires a user to be present. Unlike IP blocklisting or WAF rules, which evaluate the network envelope before any page is loaded, CAPTCHA demands that a user load the page and respond to a challenge. That challenge-response structure is precisely what places CAPTCHA in Layer 1.

The original insight behind CAPTCHA was correct: some tasks are easy for humans and hard for automated scripts. Ask a user to identify fire hydrants in a grid of images, and a script that does not have visual recognition capabilities will fail. Require a checkbox interaction that produces a convincing mouse movement pattern, and scripted browsers running in a loop will produce obviously mechanical responses.

Classic CAPTCHA (the reCAPTCHA v2 checkbox, image grid puzzles) challenges users with tasks that rely on human visual perception. The assumption is that a script cannot solve what requires vision. That assumption held for years, and then it stopped holding.

Behavioral CAPTCHA - reCAPTCHA v3, Cloudflare Turnstile, and similar systems - moved to a different model. Instead of asking every user to solve a puzzle, these systems passively assess the session using signals such as mouse movement entropy, scroll velocity, timing patterns, and other behavioral fingerprints that tend to differ between human users and automation. The result is a risk assessment. When the session looks low risk, no challenge is shown. When it looks high risk, friction is added: Turnstile can fall back to an interactive check, while reCAPTCHA v3 never interrupts the user and instead returns a score (1.0 means very likely legitimate, 0.0 very likely a bot) that the site must act on. This is a meaningful architectural improvement: it reduced friction for legitimate users while maintaining signal collection.
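How a site might act on such a score can be sketched as follows. This is a minimal illustration, not any vendor's API: the names `decide` and `riskFromTrust`, the 0.5 threshold, and the convention that higher values mean higher risk are all assumptions. Some services report the inverse convention (higher means more likely legitimate), which is what the small conversion helper is for.

```typescript
type Decision = "allow" | "challenge";

// Hypothetical cut-off: sessions at or above this risk get a challenge.
const CHALLENGE_THRESHOLD = 0.5;

// Convert a trust-style score (higher = more likely legitimate) into risk.
function riskFromTrust(trustScore: number): number {
  return 1 - trustScore;
}

// riskScore is assumed to lie in [0, 1], where higher means riskier.
function decide(riskScore: number): Decision {
  // Out-of-range or NaN scores are treated as risky, not silently allowed.
  if (!(riskScore >= 0 && riskScore <= 1)) return "challenge";
  return riskScore >= CHALLENGE_THRESHOLD ? "challenge" : "allow";
}
```

Whatever the threshold, the decision is made once, from one score. Nothing in it reflects what the session does afterwards.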

What CAPTCHA genuinely catches is important to understand. Unsophisticated scripted bots executing form submissions in a simple loop produce unmistakable automation signatures. A headless browser that shows no cursor movement, populates fields instantly, and completes the form in tab order in under a second will score poorly on behavioral CAPTCHA and fail a classic challenge. This is real coverage. It is also the coverage that has the narrowest application to modern fraud.

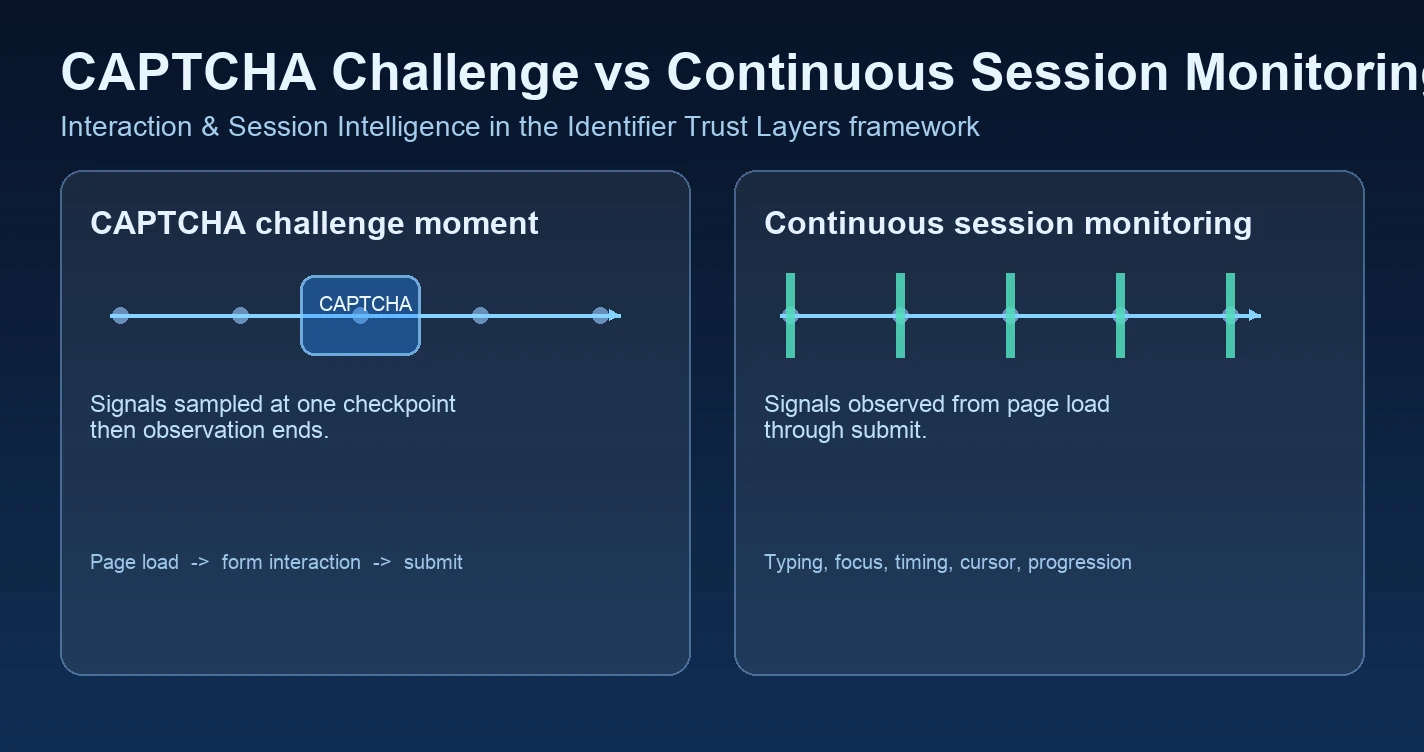

The problem is structural. CAPTCHA evaluates a checkpoint in the session, typically the challenge moment. Before it, the session may have produced dozens of behavioral signals. After it, the session continues. In many real-world implementations, active scoring does not continue across the full session window. The challenge is a gate, and once that gate passes, the remaining behavior often goes unexamined.

That creates a precise ceiling on what CAPTCHA can catch. A sophisticated bot that generates convincing mouse movement entropy, pauses appropriately, and modulates its speed through the challenge will score well on behavioral CAPTCHA - not because it is human, but because it was specifically designed to produce the signals that CAPTCHA scores on. Human-assisted operations typically pass CAPTCHA because a real human is producing the interaction. Low-and-slow automation, tuned to replicate plausible human timing, produces behavioral signals that fall within the range CAPTCHA considers acceptable.

The gap is not a flaw in CAPTCHA's implementation. It is a consequence of what CAPTCHA was designed to do: prevent simple automation from reaching a form. That purpose has become narrower as automation has become more sophisticated. What CAPTCHA misses is not an edge case. It is a large category of modern fraud.

How Behavioral Fraud Detection Works in Sessions

Where CAPTCHA evaluates a moment, continuous session intelligence monitors the entire window from page load to form submission. The behavioral evidence it produces is substantially richer than anything a single challenge-response can surface.

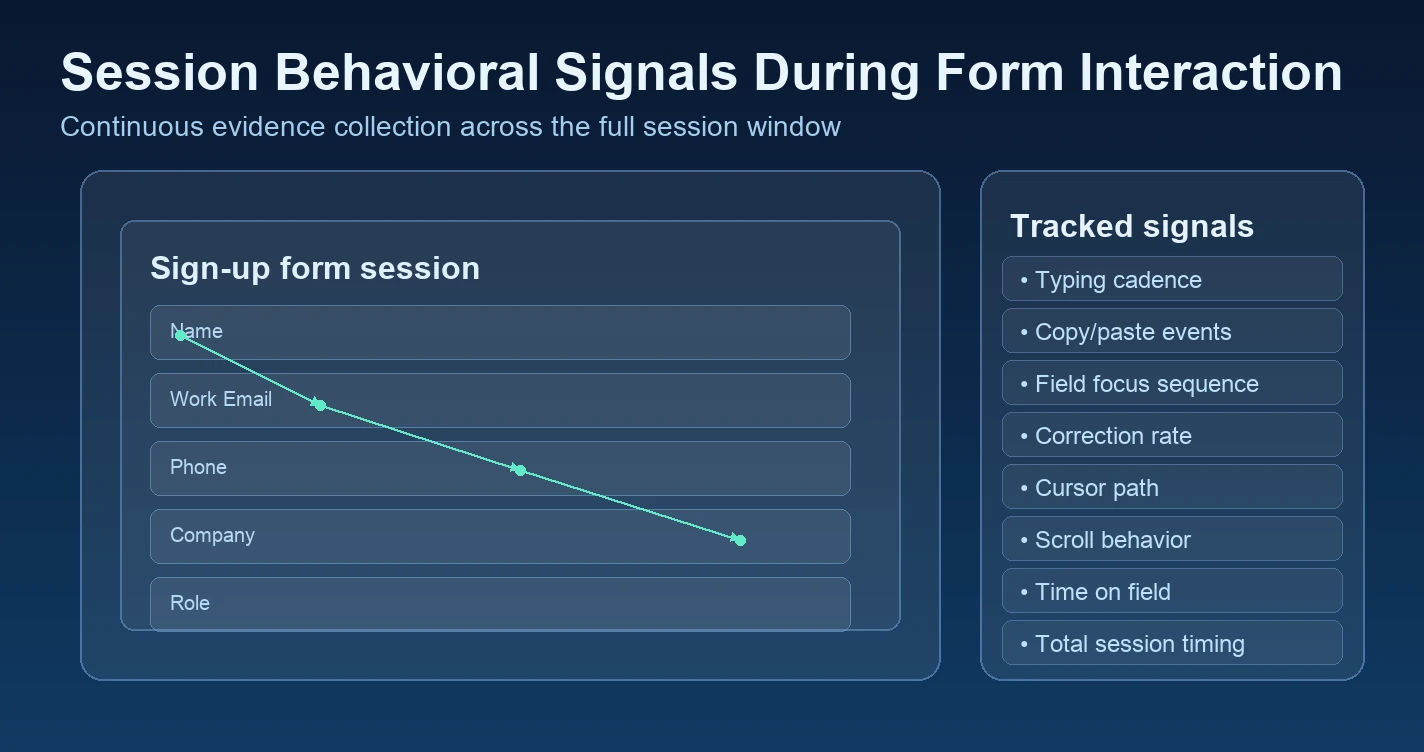

Typing cadence is one of the most discriminating signals in the session. Real users type at variable speeds, with pauses between words, occasional hesitations when recalling information, brief bursts when entering familiar strings like an email address they have typed many times. They make errors. They backspace. The character-by-character timing of a natural typing pattern is irregular in ways that are characteristic of human cognition and motor control.

Automated form completion produces a different pattern. Fields populate at uniform intervals, or instantly, or at a rate that reflects a scripted delay configured to look plausible. The variance is wrong - too consistent for a human, too precise to be the output of genuine recall. The absence of corrections is particularly diagnostic: real users correct themselves; scripts produce the final value directly.
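The contrast between the two typing patterns can be made concrete with a small summary over keystroke timestamps. The sketch below is illustrative only: the `KeyEvent` shape and the `summarizeCadence` name are assumptions, and a coefficient of variation plus a correction count is just one simple way to express "how irregular was the typing, and was anything corrected".

```typescript
interface KeyEvent {
  key: string;    // e.g. "a" or "Backspace"
  timeMs: number; // timestamp in milliseconds
}

interface CadenceSummary {
  intervalCv: number;  // std deviation of inter-key gaps divided by their mean
  corrections: number; // number of Backspace/Delete presses
}

function summarizeCadence(events: KeyEvent[]): CadenceSummary {
  // Collect the gaps between consecutive keystrokes.
  const gaps: number[] = [];
  let prev: number | undefined;
  for (const e of events) {
    if (prev !== undefined) gaps.push(e.timeMs - prev);
    prev = e.timeMs;
  }
  const corrections = events.filter(
    (e) => e.key === "Backspace" || e.key === "Delete"
  ).length;
  if (gaps.length === 0) return { intervalCv: 0, corrections };

  const mean = gaps.reduce((sum, g) => sum + g, 0) / gaps.length;
  if (mean === 0) return { intervalCv: 0, corrections };
  const variance =
    gaps.reduce((sum, g) => sum + (g - mean) ** 2, 0) / gaps.length;
  return { intervalCv: Math.sqrt(variance) / mean, corrections };
}
```

A value near zero with no corrections matches the uniform, scripted pattern described above. Any cut-off for "too consistent" would need to be calibrated on real traffic.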

Copy-paste behavior is another high-signal indicator. Genuine users occasionally paste a value - an email address from a password manager, a long company name - but they rarely paste an entire form's worth of data in sequence. A session in which every field appears to have been pasted rather than typed, especially within a very short total completion time, is an anomaly worth examining. The same applies to fields that populate through browser autofill at a legitimate pace but with no preceding cursor movement to the field.
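As a rough sketch of that check, the function below flags a session only when the share of pasted fields and the total completion time are both extreme. The `FieldInput` shape, the function names, and both default thresholds are hypothetical and would need tuning.

```typescript
interface FieldInput {
  field: string;
  pasted: boolean; // true if the value arrived through a paste event
}

// Share of fields whose value was pasted rather than typed.
function pasteRatio(fields: FieldInput[]): number {
  if (fields.length === 0) return 0;
  return fields.filter((f) => f.pasted).length / fields.length;
}

// Flags the combination the text describes: (nearly) every field pasted
// AND a very short total completion time. Either one alone is not flagged.
function looksBulkPasted(
  fields: FieldInput[],
  totalMs: number,
  minRatio = 1.0, // hypothetical: all fields pasted
  maxTotalMs = 5000 // hypothetical: under five seconds
): boolean {
  return fields.length > 0 && pasteRatio(fields) >= minRatio && totalMs <= maxTotalMs;
}
```

Requiring both conditions keeps the password-manager user, who pastes one value and types the rest, out of the flagged set.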

Micro-interactions across the session provide context that goes beyond typing. Where does the cursor go between fields? Does the user scroll to review what they have entered? Do they focus a field, look away (producing a pause), and then return? Does mouse movement follow a plausible path between form elements, or does it jump in straight lines at angles that reflect programmatic positioning? These signals are individually weak but cumulatively diagnostic.
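One of these weak signals, how straight the cursor path is, can be approximated by comparing the direct distance between the first and last sampled points with the distance actually travelled. This is a simplified sketch under assumed names (`Point`, `pathStraightness`). A real monitor would likely look at curvature, speed, and jitter as well.

```typescript
interface Point {
  x: number;
  y: number;
}

function distance(a: Point, b: Point): number {
  const dx = b.x - a.x;
  const dy = b.y - a.y;
  return Math.sqrt(dx * dx + dy * dy);
}

// Ratio of straight-line displacement to distance travelled, in (0, 1].
// 1 means a perfectly straight path. Returns null when there is no movement.
function pathStraightness(points: Point[]): number | null {
  let first: Point | undefined;
  let prev: Point | undefined;
  let travelled = 0;
  for (const p of points) {
    if (first === undefined) first = p;
    if (prev !== undefined) travelled += distance(prev, p);
    prev = p;
  }
  if (first === undefined || prev === undefined || travelled === 0) return null;
  return distance(first, prev) / travelled;
}
```

A ratio of exactly 1 across every movement in a session would be one contribution to a cumulative picture, not a verdict on its own.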

Form progression anomalies combine several of these signals into patterns. A form completed with no field revisits, no hesitations, no scroll events, and a perfectly linear tab-order traversal at consistent intervals is producing a pattern that is statistically rare in genuine user sessions. Real users do not move through forms like a state machine executing a sequence. They look around. They hesitate. They change their minds.
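A minimal way to summarize progression is to compare the order in which fields received focus with the order in which they appear on the form. The function and field names below are assumptions for illustration.

```typescript
interface ProgressionSummary {
  revisits: number;        // times a previously focused field was focused again
  strictlyLinear: boolean; // focus order equals form order, one visit per field
}

function summarizeProgression(
  focusOrder: string[], // field names in the order they received focus
  formOrder: string[]   // field names in the order they appear on the form
): ProgressionSummary {
  const seen = new Set<string>();
  let revisits = 0;
  for (const field of focusOrder) {
    if (seen.has(field)) revisits++;
    seen.add(field);
  }
  const strictlyLinear =
    focusOrder.length === formOrder.length &&
    focusOrder.every((field, i) => field === formOrder[i]);
  return { revisits, strictlyLinear };
}
```

A strictly linear pass is common in genuine sessions too. It becomes interesting only in combination with the other signals named above, such as consistent intervals and the absence of scrolls or hesitations.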

Session timing is perhaps the most blunt of the available signals, but it is also among the most reliable. A page that was loaded and submitted in 400 milliseconds, with no detectable interaction gap, was not filled by a human being. A form that took 38 seconds to complete, with all 38 seconds occurring in the first field and then zero time on the remaining five fields, is not consistent with how people fill out forms. Timing anomalies of this kind are exactly the signal that continuous session monitoring is positioned to surface - and exactly the signal that CAPTCHA would not capture because the challenge might have occurred partway through and produced a reasonable score.
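Both timing examples above can be expressed as simple checks over per-field dwell times. The sketch below uses hypothetical thresholds (a 2-second minimum total and a 90% maximum share for any one field). The flag names are also made up for illustration.

```typescript
// dwellMs holds the time spent in each field, in milliseconds, in form order.
function timingFlags(
  dwellMs: number[],
  minTotalMs = 2000, // hypothetical floor for a plausible human completion
  maxShare = 0.9     // hypothetical cap on one field's share of total time
): string[] {
  const flags: string[] = [];
  const total = dwellMs.reduce((sum, ms) => sum + ms, 0);
  if (total < minTotalMs) flags.push("too_fast");

  const longest = dwellMs.reduce((max, ms) => Math.max(max, ms), 0);
  if (dwellMs.length > 1 && total > 0 && longest / total > maxShare) {
    flags.push("single_field_dominates");
  }
  return flags;
}
```

With these defaults, `timingFlags([38000, 0, 0, 0, 0, 0])` returns `["single_field_dominates"]`, matching the 38-second example, and a form whose fields total 400 milliseconds returns `["too_fast"]`.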

What the Device Fingerprint Layer Adds

Session behavior describes how an interaction happened. Device fingerprinting describes what device produced it - and, critically, whether that device has been seen before.

A device fingerprint is a composite identifier assembled from attributes that browsers expose: user agent string, operating system, screen resolution, color depth, language settings, timezone, installed fonts, WebGL vendor and renderer (which typically identify the GPU), hardware concurrency count, approximate device memory, canvas rendering characteristics, and audio context properties. Individually, none of these attributes is unique. Taken together, the combination produces a fingerprint that is usually stable across sessions and distinguishes most devices, although browser updates can shift it and identically configured devices can collide.
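The idea of a composite identifier can be sketched by serializing the attributes in a fixed order and hashing the result. Everything here is an assumption for illustration: the attribute names, the `fingerprintOf` helper, and the choice of a small FNV-1a-style 32-bit hash, which is far too short for production use. A real implementation would use more attributes and a stronger hash.

```typescript
type DeviceAttributes = Record<string, string | number>;

// FNV-1a-style 32-bit hash over UTF-16 code units (equivalent to byte-wise
// FNV-1a only for ASCII input). Chosen here only because it is short.
function fnv1a32(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return ("00000000" + hash.toString(16)).slice(-8);
}

// Sort keys so the same attributes always serialize the same way,
// regardless of the order in which they were collected.
function fingerprintOf(attrs: DeviceAttributes): string {
  const serialized = Object.keys(attrs)
    .sort()
    .map((key) => `${key}=${attrs[key]}`)
    .join("|");
  return fnv1a32(serialized);
}
```

Two sessions that report the same attribute values produce the same identifier, and a change to any single attribute generally produces a different one. That property is what makes the fingerprint comparable across submissions.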

The immediate application is headless browser detection. A browser launched by a script - whether that script is running Puppeteer, Playwright, Selenium, or a custom automation framework - produces a fingerprint with characteristic signatures. The GPU identifier may report an emulated renderer. The installed font list may be minimal or reflect a container image rather than a real operating system. Audio context behavior may indicate a sandboxed environment. Certain browser APIs may be absent or return stub values. These signatures are not always individually conclusive, but in combination they often distinguish a script-controlled browser from a real user session with reasonable confidence.
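A heuristic check along these lines might look like the sketch below, which works on a snapshot of values already collected in the browser. The `BrowserSnapshot` shape, the signal names, and the font threshold are hypothetical. The `webdriver` field mirrors the standard `navigator.webdriver` flag, which is an addition not named in the text above, and "SwiftShader" and "llvmpipe" are used as examples of software renderer names.

```typescript
interface BrowserSnapshot {
  webdriver: boolean;    // value of navigator.webdriver at collection time
  webglRenderer: string; // renderer string reported through WebGL
  fontCount: number;     // number of installed fonts detected
  missingApis: string[]; // expected browser APIs found absent or stubbed
}

// Example software (non-GPU) renderer names; not an exhaustive list.
const SOFTWARE_RENDERERS = ["swiftshader", "llvmpipe"];
// Hypothetical floor; real desktop systems usually expose many more fonts.
const MIN_EXPECTED_FONTS = 10;

// Returns the names of the heuristics that matched. An empty list means
// nothing matched; it does not prove the browser is human-driven.
function automationSignals(s: BrowserSnapshot): string[] {
  const signals: string[] = [];
  if (s.webdriver) signals.push("webdriver_flag");
  const renderer = s.webglRenderer.toLowerCase();
  if (SOFTWARE_RENDERERS.some((name) => renderer.indexOf(name) !== -1)) {
    signals.push("software_renderer");
  }
  if (s.fontCount < MIN_EXPECTED_FONTS) signals.push("few_fonts");
  if (s.missingApis.length > 0) signals.push("missing_apis");
  return signals;
}
```

Returning the matched signal names rather than a single boolean reflects the point that no signature is conclusive alone. The caller can weigh how many matched, and which.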

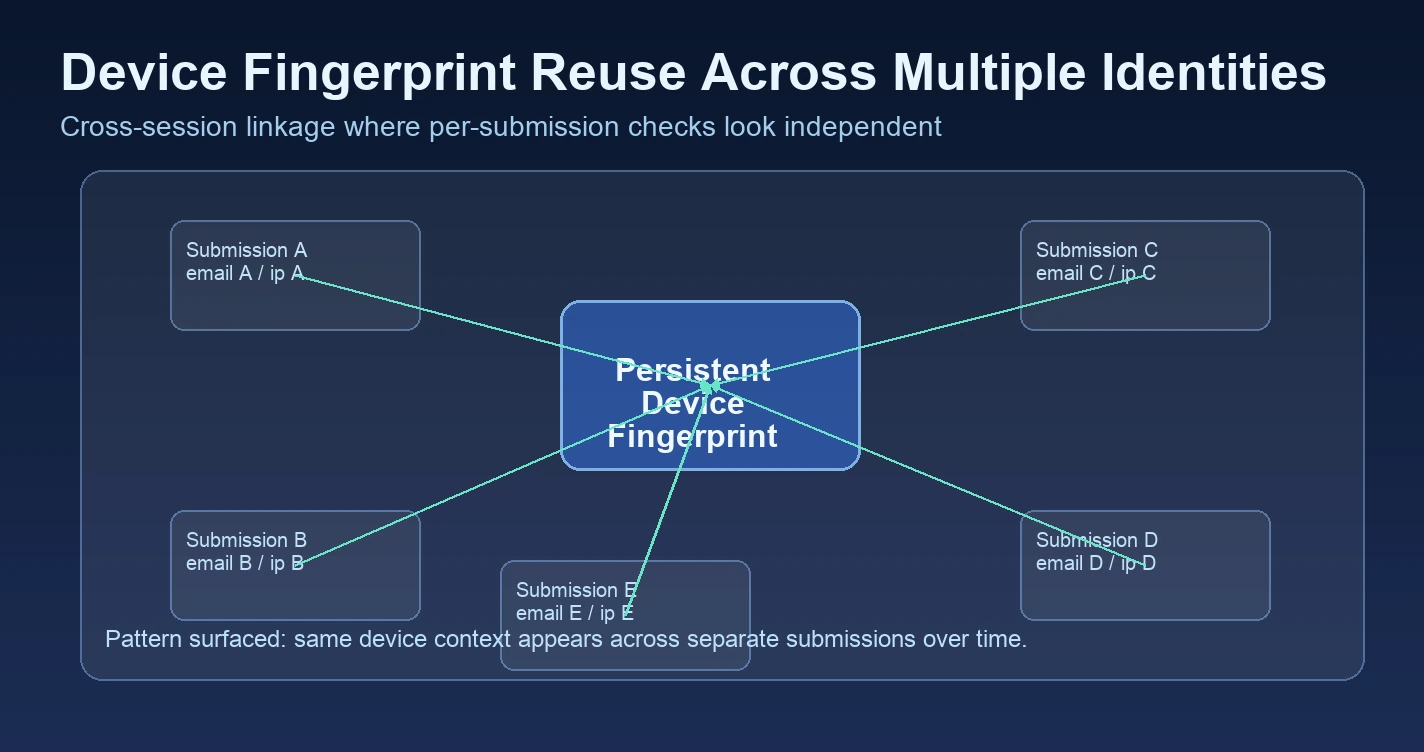

More consequential than per-session detection is device fingerprint reuse across identities. A single device appearing across multiple submitted identities over hours or days is a pattern that email checking, IP checking, and CAPTCHA are individually blind to. The email addresses may all be different. The IPs may rotate through residential proxies. The CAPTCHA challenge may have been passed on each submission. But the device fingerprint connecting them is the same. The device is the consistent identifier that none of the other checks can see.

This is particularly relevant for multi-account creation patterns. Trial abuse via identity rotation - submitting with different emails and IPs but from the same device - is a class of fraud that is structurally invisible to per-submission checks unless those checks include a fingerprint component. The fingerprint is the thread that connects what would otherwise look like independent, unrelated submissions.
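Following that thread amounts to grouping submissions by fingerprint and counting distinct identities per device. The sketch below assumes a simple `Submission` record and a hypothetical threshold of three identities. A production check would also bound the time window.

```typescript
interface Submission {
  fingerprint: string;
  email: string;
}

interface ReuseFinding {
  fingerprint: string;
  identities: number; // distinct emails seen from this device
}

function findReusedDevices(
  submissions: Submission[],
  minIdentities = 3 // hypothetical threshold
): ReuseFinding[] {
  // Map each fingerprint to the set of normalized emails submitted from it.
  const emailsByDevice = new Map<string, Set<string>>();
  for (const s of submissions) {
    const emails = emailsByDevice.get(s.fingerprint) ?? new Set<string>();
    emails.add(s.email.trim().toLowerCase());
    emailsByDevice.set(s.fingerprint, emails);
  }
  const findings: ReuseFinding[] = [];
  emailsByDevice.forEach((emails, fingerprint) => {
    if (emails.size >= minIdentities) {
      findings.push({ fingerprint, identities: emails.size });
    }
  });
  // Most heavily reused devices first.
  return findings.sort((a, b) => b.identities - a.identities);
}
```

Nothing in the grouping depends on the email or IP looking suspicious. Each submission can be individually clean and the pattern still surfaces.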

Network fingerprinting adds a coarser but persistent supplementary identifier. An IP-prefix-based fingerprint persists across IP rotation within the same ISP or carrier range. Where a specific IP address changes with every request, the network context (the ISP, the ASN, the regional prefix) may remain consistent. This is not a precise identifier, but it provides corroborating context when evaluating whether submissions that appear to come from different IPs are actually originating from the same network environment.
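As a minimal sketch of a prefix-based key, the function below reduces an IPv4 address to its /24 network. The /24 granularity and the IPv4-only handling are assumptions made to keep the example short. A fuller implementation would likely also use ASN or ISP data, which requires an external lookup.

```typescript
// Returns a coarse network key such as "203.0.113.0/24",
// or null if the input is not a valid dotted-quad IPv4 address.
function ipv4PrefixKey(ip: string): string | null {
  const parts = ip.trim().split(".");
  if (parts.length !== 4) return null;
  for (const part of parts) {
    if (!/^\d{1,3}$/.test(part) || Number(part) > 255) return null;
  }
  // Normalize each octet (e.g. "013" -> 13) and drop the host octet.
  return parts.slice(0, 3).map(Number).join(".") + ".0/24";
}
```

Two addresses that rotate within the same /24 map to the same key, so submissions that look unrelated by exact IP can still be compared at the network level.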

The Threats That Only This Layer Exposes

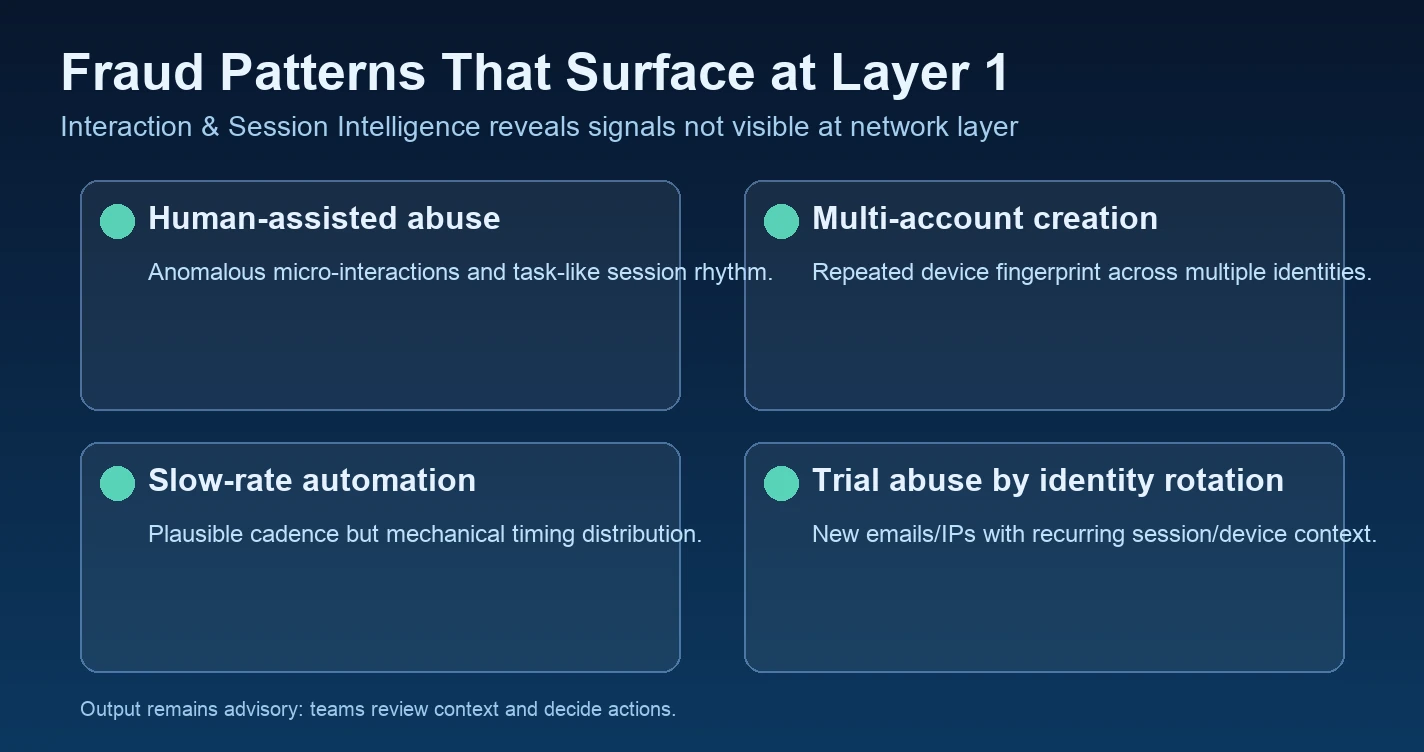

Understanding Layer 1's coverage means understanding the specific fraud categories that are invisible to the network layer but detectable through behavioral and device signals. These are not theoretical attack types. They represent the bulk of modern abuse reaching sign-up flows, trial pipelines, and onboarding forms.

Human-assisted abuse is the hardest category to catch and the most important to understand. In these operations, a real human being sits at a real device on a real residential network and fills out forms with fabricated identities. They pass every network check because their traffic is indistinguishable from legitimate users at the network level - it is produced by actual humans. They often pass CAPTCHA for the same reason. But they do not fill forms the way legitimate users do. The cognitive patterns are different. They are working through a list, not genuinely registering for a service. Form behavior can sometimes reflect this: faster-than-typical completion for the identity fields they are familiar with, minimal exploration of the page, inconsistent micro-interaction patterns, and timing that reflects task-oriented completion rather than genuine interest. The more disciplined the operation, the harder these signals are to surface - but even trained operators rarely reproduce the full behavioral richness of genuine sign-up intent.

Multi-account creation exploits the gap between per-submission checks. Each submission looks independent: a new email address, a fresh IP, a passed CAPTCHA. But the device fingerprint connecting them is constant. Without Layer 1's device fingerprint component, a team evaluating only the submitted records has no way to recognize that what appear to be ten independent sign-ups are actually ten submissions from the same device in the same afternoon.

Slow-rate automation is specifically calibrated to evade rate-limiting and detection. A script configured to submit one form every few minutes, from different IPs, with plausible-looking delays, will never cross a rate-limit threshold. What it cannot do is produce the full behavioral richness of a genuine human session. Typing cadence is wrong. Micro-interaction patterns are absent or mechanical. Session timing has the wrong distribution. These signals remain detectable even when submission frequency has been deliberately suppressed to avoid triggering other checks.

Trial abuse via identity rotation is a variant of multi-account creation where the intent is specifically to exploit free trial or freemium onboarding. Different emails and IPs give each submission the appearance of a new user. The device fingerprint connecting them is the signal that makes the pattern visible. Without it, each trial sign-up looks legitimate in isolation.

CAPTCHA Plus Behavioral Monitoring: Complementary, Not Redundant

There is a framing error that surfaces frequently in fraud stack discussions: treating CAPTCHA and behavioral monitoring as competing approaches, where deploying one means the other is unnecessary. This is not how the layers work.

CAPTCHA challenges at a moment; behavioral monitoring watches the whole session. They are evaluating different things at different points in time. Used together, CAPTCHA filters the obvious population of unsophisticated scripted bots - those that cannot produce a convincing interaction at the challenge moment - while behavioral monitoring examines the full session context of everything that passes through it. The two layers are additive, not substitutes.

What CAPTCHA does well, it does efficiently. For teams already running reCAPTCHA v3 or Cloudflare Turnstile, those signals contribute a genuine first-pass filter. The mistake is treating that filter as complete coverage rather than as the first pass it actually is.

Where behavioral monitoring adds depth is in the session as a whole: the signals before the challenge, the signals after it, and the device context that CAPTCHA's challenge mechanism does not evaluate at all. A session that passes the CAPTCHA challenge with a high confidence score but exhibits robotic typing cadence, instant field population, and a device fingerprint that appeared on nineteen previous submissions this week is not producing a clean signal. It is producing a CAPTCHA pass alongside multiple behavioral anomalies.
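The way the two sources of evidence combine can be sketched as a single review decision. This is an illustrative rule under assumed names and a hypothetical device-reuse limit, not a description of how any particular product scores sessions.

```typescript
interface SessionEvidence {
  captchaPassed: boolean;
  anomalies: string[];               // e.g. "robotic_cadence", "instant_fill"
  priorSubmissionsFromDevice: number; // earlier submissions with this fingerprint
}

// A CAPTCHA pass is necessary here but not sufficient: behavioral anomalies
// or heavy device reuse still send the submission to review.
function needsReview(
  evidence: SessionEvidence,
  maxPriorSubmissions = 5 // hypothetical limit
): boolean {
  if (!evidence.captchaPassed) return true;
  return (
    evidence.anomalies.length > 0 ||
    evidence.priorSubmissionsFromDevice > maxPriorSubmissions
  );
}
```

Applied to the session described above (CAPTCHA passed, two behavioral anomalies, nineteen prior submissions from the same device), `needsReview` returns `true`.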

Honest framing: CAPTCHA is a useful entry point for Layer 1. It is not a complete implementation of it. The teams that discover this distinction after the fact - after fake leads have contaminated a pipeline, after trial credits have been exhausted by the same device across fifty accounts - are the ones who have learned that Layer 1 is not synonymous with CAPTCHA, and that the session contains evidence that CAPTCHA was never designed to surface.

How Opportify Captures the Full Interaction and Session Layer

Opportify Fraud Protection is instrumented at Layer 1 from the moment your page loads. A single JavaScript snippet, added once to your form page, initializes a session monitor silently on page load with no visible effect on the user experience.

From initialization to submission, the snippet observes behavioral signals: typing cadence across every field, copy-paste events, cursor movement and path, field focus and exit timing, scroll events, form progression pattern, and overall session timing. These signals do not interrupt the user's interaction. They accumulate in the background.

On submission, the behavioral profile of the session is attached to the submission record. Fraud Protection evaluates it alongside identifier-level signals from Layer 2 - email quality, IP reputation, phone number signals - producing a unified risk score that reflects both the session behavior that produced the submission and the quality of the identifiers submitted. A submission can score high risk because its behavioral signals are anomalous, because its identifiers are poor quality, or because both layers are producing signals that align.

The output is a risk score with explainable signals: which signals fired, how they were weighted, and why the score landed where it did. Your team sees the reasoning, not just a number. The decision on what to do with a high-risk submission - flag it, route it to manual review, require additional verification, or accept it - is always yours. Fraud Protection provides the signal. The action is with your team.

No backend changes are required. No additional infrastructure. One script, added once, starts producing session-level signals on the next form submission.

If your fraud stack currently ends at the network layer - a WAF, rate limits, and CAPTCHA - Layer 1 is the next logical gap to close. It is where the signals that your network defense cannot see first become readable. And it is where a significant portion of the fraud that passes through your funnel undetected is already producing detectable evidence.

Start your free Fraud Protection trial (no credit card required)

Key Takeaways

- Layer 1 is where the session lives. Every form submission is the end of a session full of behavioral evidence. Most fraud stacks discard that evidence entirely - only the submitted record is evaluated.

- CAPTCHA evaluates one moment. Challenge-based CAPTCHA operates at a single point in the session, and in many deployments behavioral CAPTCHA is scored at a single point as well. Once that gate passes, the rest of the session often goes unexamined.

- Continuous behavioral monitoring covers the whole session. Typing cadence, copy-paste behavior, micro-interactions, form progression anomalies, and session timing are signals that a single CAPTCHA checkpoint does not evaluate across the full session and that continuous session monitoring can surface.

- Device fingerprinting exposes multi-submission patterns. The same device appearing across multiple identities is invisible to per-submission checks. Only a persistent fingerprint component can connect submissions that are individually designed to appear independent.

- CAPTCHA and behavioral monitoring are additive. CAPTCHA filters unsophisticated bots at the challenge moment. Behavioral monitoring catches what passes it. Deploying both produces more coverage than either alone.

- Layer 1 has its own blind spot. Behavioral and device signals describe how a form was filled and by what device. They do not evaluate the quality or legitimacy of the identifiers submitted - the email address, IP, and phone number. That is Layer 2: Input and Signal Intelligence.

Continue to Layer 2: Input and Signal Intelligence (coming soon).

Part of the Identifier Trust Layers series by Opportify. Previous: Layer 0: Traffic Filtering and Network Defense. Related reading: CAPTCHA Is Dead: What Replaces It.