Layer 0: Your Network Defense Catches What It Can See. Here Is What It Cannot.

This is Post 1 in the Identifier Trust Layers series by Opportify, a framework that maps where trust can be evaluated across the full user lifecycle, and where the gaps are. Each post covers one layer in depth.

The Checkpoint That Fires Before Anyone Touches Your Form

Every request that reaches your application passes through a network layer first. Before a user has loaded your page. Before they have moved a cursor or entered a single character. Before any identifier has been submitted.

This is Layer 0 in Opportify's Identifier Trust Layers framework: Traffic Filtering and Network Defense. It is the earliest point in the user lifecycle where any evaluation of risk is possible, and it operates entirely on network-level signals such as IP reputation, rate patterns, and WAF rules.

Identifier Trust Layers is Opportify's original framework for mapping fraud coverage across the user lifecycle. It is not an industry standard or a third-party model.

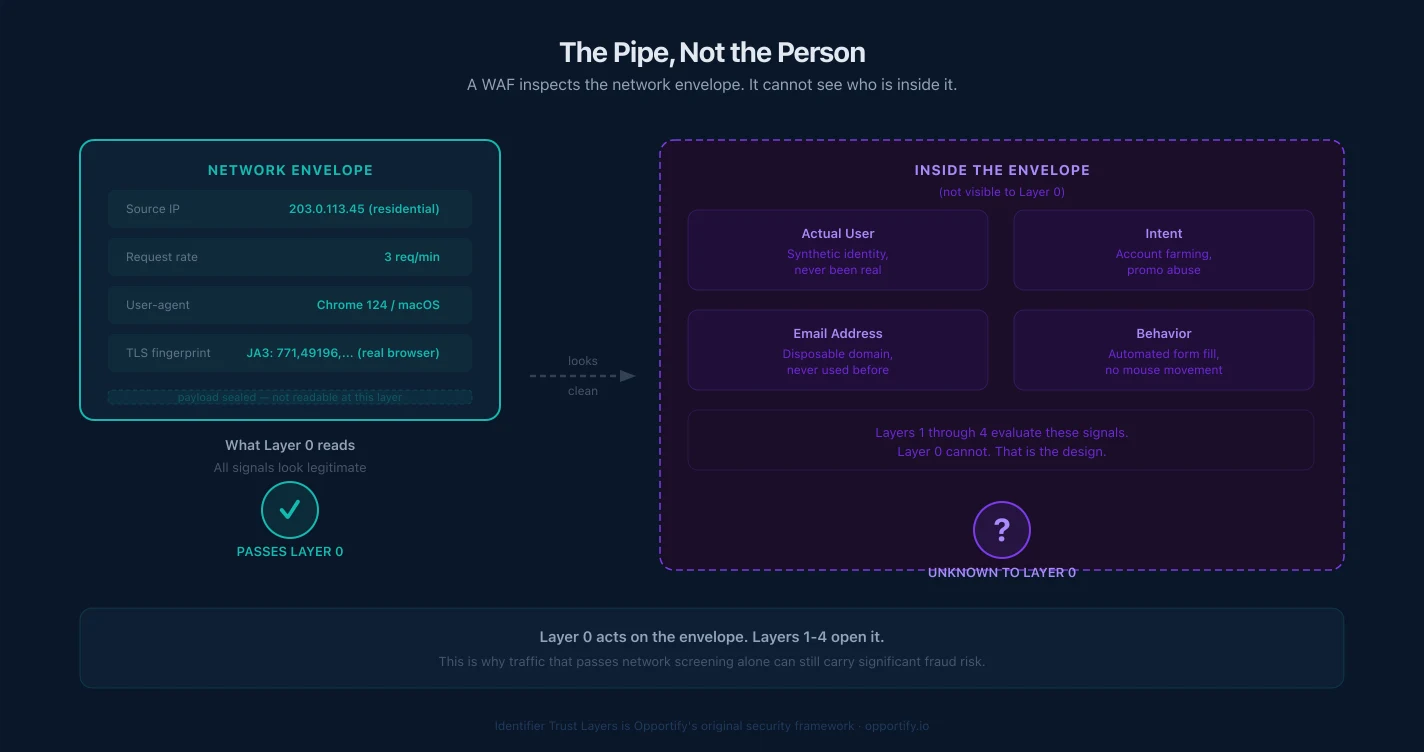

What that means in practice: Layer 0 cannot see behavior, cannot evaluate the quality of data a user will submit, and has no view of intent. It sees the pipe: the origin of a request, the structure of the traffic, the rate at which requests arrive, and whether the source matches known threat intelligence.

That is a meaningful set of capabilities. It is also a precise boundary.

Understanding what Layer 0 covers, and where that coverage ends, is the foundation for building a fraud prevention stack that actually closes gaps rather than creating a false sense of protection at the edge.

What Network-Level Defense Actually Catches

Network-level defenses have matured significantly. Cloudflare, AWS WAF, Google Cloud Armor, and their equivalents provide real, production-tested protection. Here is what they are genuinely effective at catching:

IP Blocklisting and Reputation Filtering

Threat intelligence feeds maintain constantly updated lists of known malicious IPs: Tor exit nodes, datacenter ranges associated with scanning and abuse, high-risk ASNs, IPs tied to historical botnets or credential stuffing campaigns.

WAF and CDN providers consume these feeds and block or challenge matching traffic before it reaches your application server. This is effective against attackers who have not rotated their infrastructure. A botnet operating from known datacenter ranges, an attacker reusing a previously flagged IP, or traffic arriving from a Tor exit node: all of these produce a clear network signal that blocklisting addresses.

The coverage is real. So is the limit: it depends entirely on the attacker's infrastructure being on a list.
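As a rough illustration of how list-based filtering behaves, here is a minimal Python sketch. The `BLOCKED_NETWORKS` entries and the `is_blocklisted` helper are hypothetical, and the addresses are documentation-reserved ranges standing in for real threat-feed data. Production WAFs and CDNs do this at far larger scale, with continuously refreshed feeds.

```python
import ipaddress

# Hypothetical blocklist. Documentation-reserved ranges stand in for
# entries that a real threat intelligence feed would supply.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),      # stand-in for a flagged datacenter range
    ipaddress.ip_network("198.51.100.17/32"),  # stand-in for a single flagged exit node
]


def is_blocklisted(ip: str) -> bool:
    """Return True if the address falls inside any listed network."""
    addr = ipaddress.ip_address(ip)
    return any(addr in network for network in BLOCKED_NETWORKS)


print(is_blocklisted("192.0.2.44"))   # inside the listed /24
print(is_blocklisted("203.0.113.9"))  # not on any list, so it passes
```

The second lookup is the limit in miniature: an address that is not on a list is simply allowed, whatever the intent behind it.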

Rate Limiting

Volumetric attacks (credential stuffing floods, brute-force attempts, high-frequency scraping) produce detectable traffic patterns. Rate limiting cuts them off at thresholds: too many requests from a single IP in a given time window, too many requests to a specific endpoint, too many failed authentication attempts.

This is effective against unsophisticated automation. Large-scale, undisciplined bot traffic that hammers endpoints in bursts is exactly what rate limiting is designed to catch.
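The threshold logic can be sketched as a sliding-window counter keyed by IP. This is an illustrative Python sketch, not how any particular vendor implements it; the class name, the threshold of 3, and the 60-second window are all assumptions chosen for the example.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per key within the last window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # key -> timestamps of allowed requests

    def allow(self, key: str, now: float) -> bool:
        hits = self._hits[key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=60)
burst = [limiter.allow("192.0.2.44", now=t) for t in (0, 1, 2, 3)]
print(burst)  # the fourth request inside the window is rejected
```

A burst from one source trips the counter quickly. The same counter has nothing to say about a source that stays under the threshold.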

WAF Rules: Known Attack Signatures

Web Application Firewalls inspect request headers and payloads for known attack patterns: SQL injection strings, cross-site scripting payloads, malformed headers, path traversal attempts. These signatures represent decades of accumulated knowledge about how attacks are structured.

WAF rules are effective against attacks that rely on payload-level exploitation, and completely irrelevant against fraud that arrives as structurally valid, well-formed requests. A form submission containing a fake name and a disposable email address produces no WAF alert. There is nothing structurally wrong with the request.
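To make the contrast concrete, here is a deliberately simplified Python sketch of signature matching. The three patterns and the `matched_signatures` helper are toy assumptions for illustration; real rule sets are far more extensive and handle encoding, evasion, and context.

```python
import re

# Toy signatures for illustration only. Real WAF rule sets are much larger.
SIGNATURES = {
    "sql_injection": re.compile(
        r"\bunion\s+select\b|'\s*or\s+'?1'?\s*=\s*'?1", re.IGNORECASE
    ),
    "xss": re.compile(r"<\s*script\b", re.IGNORECASE),
    "path_traversal": re.compile(r"\.\.[/\\]"),
}


def matched_signatures(payload: str) -> list[str]:
    """Return the names of all signatures found in the payload."""
    return [name for name, pattern in SIGNATURES.items() if pattern.search(payload)]


print(matched_signatures("q=1 UNION SELECT password FROM users"))
print(matched_signatures("file=../../etc/passwd"))
# A well-formed sign-up with a throwaway address matches nothing.
print(matched_signatures("name=Jane+Doe&email=jane%40disposable.example"))
```

The last payload is fraudulent in intent but structurally clean, so the matcher returns an empty list.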

CDN-Level Bot Fingerprinting

CDN providers with dedicated bot management tiers (Cloudflare Bot Management, Imperva, and similar platforms) can layer behavioral heuristics on top of IP reputation to identify known automation frameworks by their network signatures: specific TLS fingerprints, HTTP header ordering, missing browser-initiated headers, request timing inconsistencies. This capability requires enterprise-tier configuration and is not part of a standard WAF or CDN setup. Where it is deployed, headless browsers running without customization, simplistic scrapers, and commodity bots often have recognizable patterns at this level.

This adds meaningful coverage against unsophisticated automation. It does not address tooling that is explicitly designed to mimic legitimate browser behavior at the network level.
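One small piece of this, the header checks, can be sketched in Python. This is an assumption-laden simplification: the expected header list, the user-agent markers, and the `bot_indicators` helper are illustrative choices, and the sketch ignores TLS fingerprints, header ordering, and timing, which commercial bot management products also weigh.

```python
# Headers a mainstream browser normally sends on a page request (illustrative list).
EXPECTED_BROWSER_HEADERS = ("user-agent", "accept", "accept-language", "accept-encoding")

# Substrings that appear in the default user agents of common automation tools.
AUTOMATION_MARKERS = ("headlesschrome", "python-requests", "curl/")


def bot_indicators(headers: dict[str, str]) -> list[str]:
    """Return simple header-level hints that a request came from uncustomized tooling."""
    normalized = {name.lower(): value for name, value in headers.items()}
    indicators = [f"missing:{h}" for h in EXPECTED_BROWSER_HEADERS if h not in normalized]
    user_agent = normalized.get("user-agent", "").lower()
    indicators += [f"ua:{marker}" for marker in AUTOMATION_MARKERS if marker in user_agent]
    return indicators


script_request = {
    "User-Agent": "python-requests/2.31.0",
    "Accept": "*/*",
    "Accept-Encoding": "gzip, deflate",
}
print(bot_indicators(script_request))
```

Tooling that copies a real browser's full header set, including its user agent, would return an empty list here, which is the gap described above.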

The Boundary: Where the Network Layer Ends

Layer 0 has a structural constraint that is important to name clearly: it evaluates requests before any user interaction occurs. That boundary is also precisely where its coverage ends.

The network layer cannot evaluate what a user does once they have access. It cannot observe how a form is filled, how long a user spent on a page, whether their typing pattern looks human, or whether their device has been seen before. Those are interaction and session signals that become readable only after a user has loaded a page and started engaging with it. That is Layer 1 territory.

More fundamentally, the network layer evaluates the pipe, not the person.

A residential IP from a legitimate ISP passes every network filter, regardless of the intent of the person using it. A single, well-paced request from a mobile carrier IP produces no anomaly that any rate limit or reputation feed would flag. These are the same signals that millions of legitimate users generate.

This is not a flaw in how WAFs or CDNs are built. It is a structural property of what the network layer can see: infrastructure signals, traffic patterns, known threat fingerprints. The moment you need to evaluate who is on the other end of the connection, you have crossed into a domain the network layer was never designed to address.

One common point of confusion worth addressing here: CAPTCHA is not a Layer 0 mechanism. CAPTCHA is an interaction-based challenge: it requires the user to be present, to load a page, and to respond. That places it squarely in Layer 1, which covers interaction and session intelligence. We cover it in depth in the next post.

What Does a WAF Fail to Catch?

This is where network-level coverage has concrete consequences for fraud teams. The traffic that clears Layer 0 is not safe. It is simply traffic that carries no network-level signal of risk.

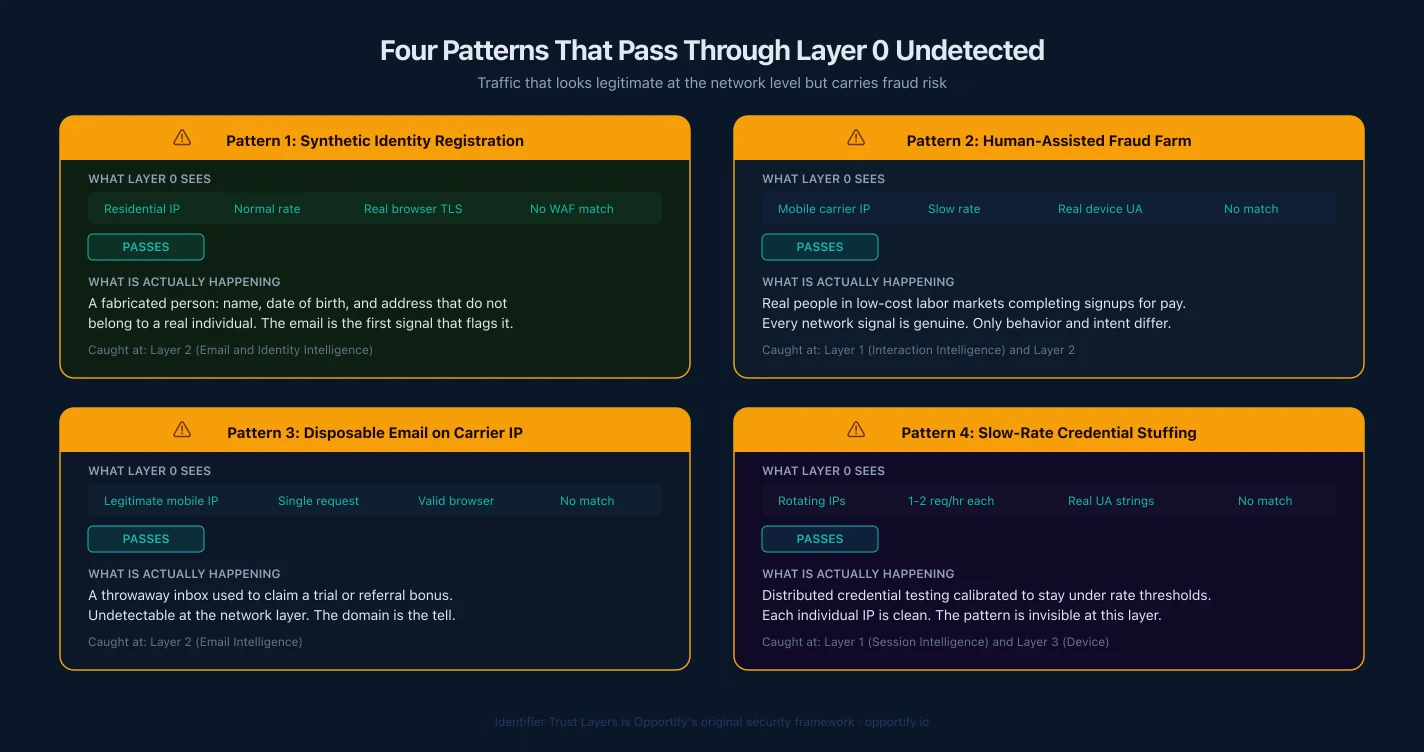

A real browser from a residential IP submitting a synthetic identity. The request looks identical to a legitimate sign-up. Clean IP, standard browser headers, reasonable request rate. The synthetic identity (a fake name, a plausible email address on a domain with a working MX record, a valid-format phone number) generates no network anomaly. It passes through completely unexamined.

A human-assisted fraud operation. Real people, using real devices, on real residential networks, filling out forms manually. No WAF rule fires. No rate limit triggers. No bot fingerprint matches. These operations are specifically designed to produce traffic that is indistinguishable from legitimate users at the network level, because it is produced by actual humans.

A disposable email submitted at a normal pace from a mobile carrier IP. Mobile carrier ranges are high-volume, legitimate-looking sources. A single submission from a mobile IP at a human typing pace produces nothing that any Layer 0 tool would flag. The email address (pointing to a disposable domain with a functioning-but-temporary mailbox) is invisible to the network layer. Its risk lives in the identifier, not the infrastructure.

Slow-rate attacks calibrated to stay under thresholds. Rate limiting works on volume. An attacker who submits one request every 90 seconds, distributed across rotating residential proxies, never crosses a threshold. The attack is invisible to rate-limit logic because it was designed to look like low-frequency legitimate traffic.
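The arithmetic behind the slow-rate case is easy to check. The Python sketch below simulates one submission every 90 seconds, rotated across ten proxy addresses, and reports the busiest per-IP count in any one-minute window. The proxy pool size, the one-hour duration, and the helper name are assumptions made for the example, and the addresses are documentation-reserved.

```python
from collections import Counter


def max_requests_per_ip_window(events, window_seconds):
    """events: iterable of (timestamp_seconds, ip). Uses fixed, non-overlapping windows."""
    counts = Counter((ip, int(ts // window_seconds)) for ts, ip in events)
    return max(counts.values(), default=0)


# Hypothetical attack: one request every 90 s for an hour, round-robin over 10 proxies.
proxies = [f"203.0.113.{i}" for i in range(1, 11)]
events = [(i * 90, proxies[i % len(proxies)]) for i in range(40)]

print(len(events))                             # total fraudulent submissions
print(max_requests_per_ip_window(events, 60))  # busiest single IP in any one minute
```

Forty submissions arrive over the hour, yet no single address sends more than one request in any minute, so a per-IP threshold of even two requests per minute would never trigger.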

These are not theoretical edge cases. They represent the majority of modern fraud reaching sign-up flows, trial pipelines, and onboarding forms. None of them produce a network-layer signal.

Why WAF Limitations Create a False Sense of Security

The danger with strong network-level defenses is not that they fail. It is that they succeed visibly, and that success is easy to measure and report.

Blocked requests per day. Flagged IPs per week. Attack traffic percentage. These are real numbers, and they represent real work that the network layer is doing. Security teams are not wrong to track them.

The problem is that measuring protection by what you catch tells you nothing about what slips through.

Everything that passes the network layer reaches your application (your forms, your sessions, your submission pipeline) with zero prior evaluation of behavior or identifier quality. There is no signal attached to it saying "this was examined and found clean." It just arrived. The network layer checked what it could check, and the rest is invisible to it.

CRM pollution, fake onboarding records, wasted KYC spend, pipeline contamination: these are downstream consequences of fraud that had no network-level signature. They did not slip through a gap in your WAF configuration. They passed through exactly as intended, because the network layer was never designed to catch them.

Teams that rely primarily on Layer 0 protection often discover fraud in the wrong place: after a sales team has worked a fake lead, after a verification budget has been spent on synthetic identities, after a fraud pattern has run long enough to show up in retrospective analytics.

The network layer is the right tool for network-level threats. It is not a substitute for evaluating the signals that only become readable after a user starts interacting with your product.

What Comes Next: The Interaction Layer

The moment a user loads your page and starts interacting, a new set of signals becomes available. How they move through a form. How their device behaves. The timing and rhythm of their input. Whether their session exhibits patterns consistent with automation or inconsistent with the identity they are claiming.

These are Layer 1 signals: interaction and session intelligence. They are completely invisible to the network layer, and they are where a significant portion of modern fraud becomes detectable.

Layer 1 is also where CAPTCHA mechanisms operate, covering both traditional challenge-response CAPTCHAs and newer passive behavioral challenge systems. Their effectiveness, their limitations, and the specific fraud patterns they miss are covered in the next post in this series.

Opportify Fraud Protection operates at Layers 1 and 2 of the Identifier Trust Layers framework, covering the gap between network defense and identity verification. It evaluates behavioral signals during the session, and identifier signals at the moment of submission, across 100+ signals per submission. The output is a risk score with explainable signals. The decision on how to act is always with your team.

No backend rewrite required. A single script is all it takes to start seeing what passes through the network layer undetected.

Continue to Layer 1: Interaction and Session Intelligence

Part of the Identifier Trust Layers series by Opportify. Related reading: WAF and CAPTCHA Alone Cannot Protect Modern Funnels.